Let's talk about DAGs with respect to Airflow. In Airflow, a DAG is a collection of tasks organized so that their relationships and dependencies are reflected. A TaskGroup is a collection of closely related tasks on the same DAG that should be grouped together when the DAG is displayed graphically. Unlike SubDAGs, where you had to create a separate DAG, a TaskGroup is only a visual-grouping feature in the UI.

Airflow uses a SQLite database to track metadata. The SQLite database and default configuration for your Airflow deployment are initialized in the airflow directory. In a production Airflow deployment, you would configure Airflow with a standard database.
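To make the idea of "tasks with dependencies" concrete, here is a minimal sketch that uses only Python's standard-library `graphlib` module. It is not Airflow's API and not how Airflow schedules work internally; the task names (`extract`, `transform`, `load`, `notify`) and the helper `execution_order` are hypothetical and chosen only for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical task names. Each key maps to the set of tasks it depends on
# (its upstream tasks), which is the relationship a DAG encodes.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "notify": {"load"},
}


def execution_order(deps):
    """Return one valid run order in which every task follows its upstream tasks.

    graphlib raises CycleError if the dependencies contain a cycle,
    which is exactly what the "acyclic" in DAG rules out.
    """
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)
```

In an actual Airflow DAG the same relationships would be declared between task objects, and the scheduler would work out which tasks are ready to run.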

Databricks recommends using a Python virtual environment to isolate package versions and code dependencies to that environment. This isolation helps reduce unexpected package version mismatches and code dependency collisions.

When you copy and run the setup commands, you perform these steps: Create a directory named airflow and change into that directory. Use pipenv to create and spawn a Python virtual environment. Initialize an environment variable named AIRFLOW_HOME set to the path of the airflow directory. Install Airflow and the Airflow Databricks provider packages (`pipenv install apache-airflow-providers-databricks`). Create an airflow/dags directory; Airflow uses the dags directory to store DAG definitions. Create an admin user with `airflow users create --username admin --firstname <firstname> --lastname <lastname> --role Admin --email <email>`.

The DAGs view provides you with a list of DAGs in your environment and a set of shortcuts to other built-in monitoring capabilities.

We use Airflow's internal behavior (which passes the dag_id in args) to optimize our loading time. It was tested with Airflow v2.3.2.

Now I need to understand where I can create a dags folder where I would put all of my DAGs. I would be very grateful if you helped me fix it.
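As a rough answer to where the dags folder lives, the sketch below resolves it from the environment. It assumes Airflow's documented defaults: `AIRFLOW_HOME` falls back to `~/airflow`, and the DAGs folder is `dags` under that home unless the `AIRFLOW__CORE__DAGS_FOLDER` environment variable overrides it. The helper name `resolve_dags_folder` is hypothetical, and the sketch does not read `airflow.cfg`, where `dags_folder` can also be set.

```python
import os
from pathlib import Path


def resolve_dags_folder(env=None):
    """Guess the DAGs folder from environment variables only.

    Assumption: airflow.cfg is not consulted, so a dags_folder set there
    would not be reflected in the result.
    """
    env = os.environ if env is None else env

    # An explicit override via environment variable takes precedence.
    override = env.get("AIRFLOW__CORE__DAGS_FOLDER")
    if override:
        return Path(override).expanduser()

    # Otherwise fall back to <AIRFLOW_HOME>/dags, with ~/airflow as the default home.
    home = Path(env.get("AIRFLOW_HOME", "~/airflow")).expanduser()
    return home / "dags"


print(resolve_dags_folder())
```

Running `airflow config get-value core dags_folder` inside the virtual environment reports the value Airflow itself resolved, which is the more reliable check.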

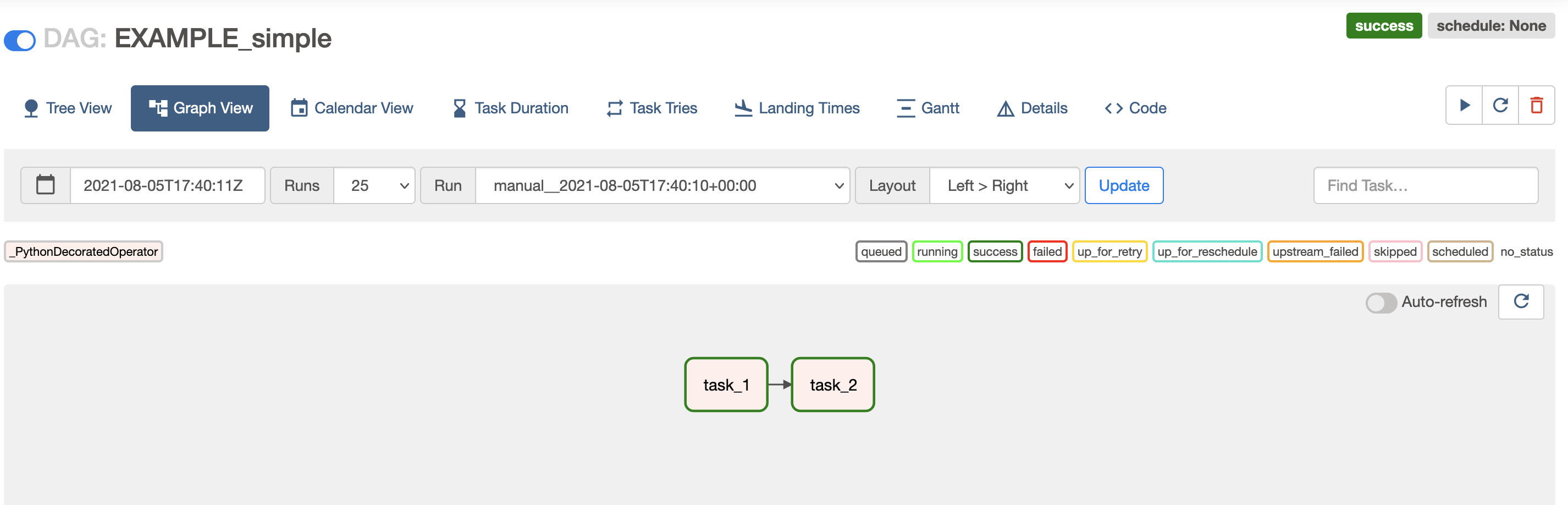

None of these showed my SampleFile.py on the Airflow webserver (I checked the dag_id in the file, and it is correct).

Building a DAG

Now it's time to build an Airflow DAG. As I said earlier, an Airflow DAG is a typical Python script which needs to be in the dags folder.
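When a file such as SampleFile.py does not appear in the webserver, two things are worth checking first: whether the file really sits under the dags folder, and whether it passes Airflow's default "safe mode" discovery heuristic, which only parses Python files whose contents contain both the strings `airflow` and `dag` (case-insensitively). The sketch below approximates those two checks with the standard library. The function name `looks_like_dag_file` is hypothetical, and the sketch ignores zip archives, `.airflowignore`, and import errors inside the file, all of which can also keep a DAG from showing up.

```python
from pathlib import Path


def looks_like_dag_file(path, dags_folder):
    """Approximate check for whether Airflow's default discovery would parse this file."""
    path = Path(path).resolve()
    dags_folder = Path(dags_folder).resolve()

    # Only Python source files are considered in this sketch.
    if path.suffix != ".py":
        return False

    # The file must live somewhere under the dags folder.
    if dags_folder not in path.parents:
        return False

    # Safe-mode heuristic: both strings must occur in the file, ignoring case.
    content = path.read_bytes().lower()
    return b"dag" in content and b"airflow" in content
```

If this returns True and the DAG is still missing, the output of `airflow dags list-import-errors` is the next place to look.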
